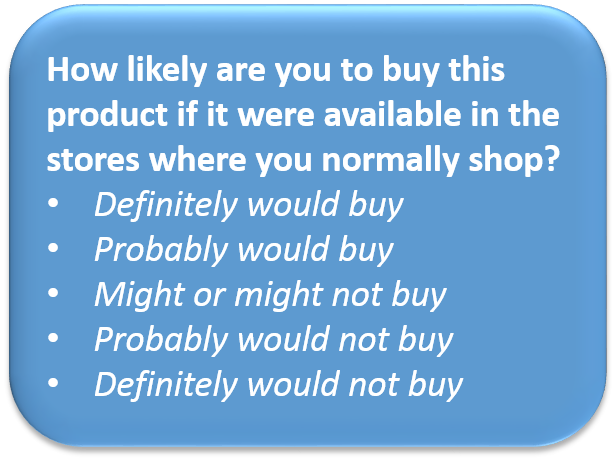

If I polled research professionals for their opinion of a question used in surveys where only 30% of those who said they definitely will do something then actually go on to do it, I’m sure their opinion would be that the question is horribly flawed.

Well, welcome to the 5 point purchase intent question!

It is documented that, when exposed to a concept for a new product, only about 30% of respondents, on average, who say “definitely will buy” will “convert”…that is, actually go on to buy the new product when it is launched. Beyond what is publicly documented, I ran the ESP model that competed with BASES for many years and I know this to be true.

Furthermore, the new product failure rate remains unacceptably high…80% is typically quoted…the models don’t seem to be helping.

And yet, maybe a billion dollars is spent each year making sales forecasts and launch decisions from models based on the sacrosanct but flawed 5 point purchase intent question.

However, thinking about this with my digital ad targeting hat on, lately I’ve been wondering if the conversion problem is in large part due to marketers taking a mass media approach to launching a new product rather than strictly the fault of the question itself.

Think about how a concept test, forecasting and launch process usually works. A supplier conducts the test and makes a forecast and, if it is positive, the client launches the product, usually with front-loaded mass media. The supplier’s focus is on the accuracy of the aggregated forecast, not on the media strategy. The supplier gets re-involved at month 9 or so to begin the forecast validation process. The process was invented in the traditional analog mass media days, yet advertising technology has changed A LOT, so perhaps the process needs to be reinvented as well.

Here is what I am beginning to work on. I want to profile concept acceptors using digitally targetable profiling variables and then use programmatic means to address messages and offers to those who are most likely to be concept acceptors AT SCALE (CAAS), that is, all cookied users who are modeled to look like concept acceptors.

So let’s use ad targeting to redeploy dollars to CAAS. It is conceivable that this would increase reach against CAAS from, say, 50% to 75% and double the average message frequency. Do you think that the 30% “conversion rate” would increase? Of course, it has to. Could it get to 50%? I believe so. Would that be the difference between a successful launch and a failure? Well, the math says that year one trial might increase by 2-4 percentage points and yes, that could make the difference between success and failure in many cases.
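To make that arithmetic concrete, here is a rough sketch. The 30% and 50% conversion rates come from the text above; the top-box shares of 10% and 20% are purely my illustrative assumptions, and the function names are hypothetical. It also ignores awareness, distribution and the “probably will buy” group, so treat it as back-of-envelope math rather than a forecasting model.

```python
def trial_points_from_top_box(top_box_share, conversion_rate):
    """Percentage points of trial contributed by 'definitely will buy' respondents."""
    return 100 * top_box_share * conversion_rate


def trial_gain(top_box_share, old_conversion=0.30, new_conversion=0.50):
    """Extra points of trial if top-box conversion rises from old to new."""
    before = trial_points_from_top_box(top_box_share, old_conversion)
    after = trial_points_from_top_box(top_box_share, new_conversion)
    return after - before


# Assumed top-box shares (illustrative only, not from any study).
for share in (0.10, 0.20):
    print(f"top-box share {share:.0%}: +{trial_gain(share):.1f} points of trial")
```

Under these assumptions, a concept with 10% “definitely will buy” gains about 2 points of trial and one with 20% gains about 4, which is the 2-4 point range mentioned above.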

Most assume that the key value from a purchase intent question in a concept test study is that it enables a sales forecast. I suggest we challenge that as well. A Moneyball new product forecasting approach needs a database of many launch experiences with many profiling and classification variables but does not necessarily require purchase intent. The first version of the ESP model in the mid-1970s was based on published work from Professor Jerry Eskin, and it did not use a purchase intent question; it used category or segment annual penetration. Par share approaches like the Hendry model or the Zipf distribution do not need purchase intent either.

So basically, what I am saying is that the purchase intent question should be thought of as the basis for a targeting model that targets CAAS and so we should judge its value that way, especially if Moneyball-style means of forecasting performance have been created.

Now, the next issue becomes: is there a BETTER model for identifying high potential customers and then scaling via lookalike modeling vs. using the 5 point purchase intent question alone? I think there must be. First, there are better questions: I prefer constant sum (allocating 10 points across brands under consideration) and the Juster scale (an expanded version of purchase intent with words and probabilities…for example, the top box is “almost certain, 99 in 100 chance”). Secondly, as with any ad targeting model, we would bring in other variables if they have predictive value. For example, we might find that users who download recipes are more likely to try any new meal food product, irrespective of stated purchase interest.

In a digital ad targeting way of thinking, alternative targeting models are testable via a bakeoff on the different methods…the value of each version of a purchase interest question can be assessed in terms of the targeting model it enables which produces the highest rate of triers.
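A bakeoff like this can be sketched in a few lines. Everything here is hypothetical: the function names, the two model labels, and the ten-user toy data are invented only to show the mechanics, and they are not results from any study. In practice the scores would come from fitted targeting models and the trial flags from a holdout group or in-flight campaign cells.

```python
def trial_rate_among_targeted(scores, tried, target_fraction):
    """Trial rate among the top-scoring fraction of users under one targeting model."""
    n_target = max(1, round(len(scores) * target_fraction))
    # Rank users from highest to lowest score; ties keep their original order.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    targeted = ranked[:n_target]
    return sum(tried[i] for i in targeted) / n_target


def bakeoff(models, tried, target_fraction=0.3):
    """Return the model whose targeted users show the highest trial rate."""
    results = {
        name: trial_rate_among_targeted(scores, tried, target_fraction)
        for name, scores in models.items()
    }
    winner = max(results, key=results.get)
    return winner, results


# Toy data: 1 means the user went on to try the product.
tried = [1, 0, 1, 0, 0, 0, 1, 0, 0, 0]
models = {
    "five_point": [5, 5, 4, 4, 3, 3, 2, 2, 1, 1],  # stated intent, 5 = definitely
    "juster": [0.9, 0.2, 0.8, 0.3, 0.1, 0.2, 0.7, 0.1, 0.0, 0.1],  # stated probability
}
winner, results = bakeoff(models, tried)
print(winner, results)
```

The point of the design is that each question is judged only by the trial rate of the audience it selects, not by how well it feeds a volume forecast.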

So how would I re-engineer the new product evaluation process?

- Create a Moneyball model for forecasting

- Commit to creating a user-level predictive model for triers based on purchase interest and other profiling variables

- Use the measure of purchase interest that permits the best CAAS targeting model

- Bring in other variables to fully flesh out the targeting model

- Track the launch not only in terms of traditional consumer and sales measures but use campaign testing approaches to refine the CAAS profile and targeting strategies in flight

Joel – exactly!

Although you’ve found a cunning suggestion for preserving the PI question (use it, not to quantify potential sales but to find prospects), my aversion to the question is so strong that I would still not use it. I’d much prefer your constant sum suggestion. It’s the one ‘old’ question that has survived the test of time really well.

Seems you want to keep the question when you may not need to. The empirical question is, if you could collect such data, how much does it improve your ability to generate results compared with going without it? I’m not sure you could gather the data from all potential targets fast enough to influence decisions in real time. In essence, can you get 90% of the way to identifying a target without doing the survey?

Hi Jack: The question you raise is whether or not concept testing, as it exists, is really necessary anymore and what would replace it? That is a question that upsets many suppliers who generate substantial revenue from it and many clients who know how to order up concept testing. On the other hand, it is exactly the right question for marketers to ask. I think it should be my next blog.

Launching a new product without a good market strategy will lead to a loss. Don’t you think good market research and an entry strategy will always help a new product to succeed? Can I see some examples in this field and the market research done on it? View market research reports: http://thebusinessresearchcompany.com/our-research-31.html
