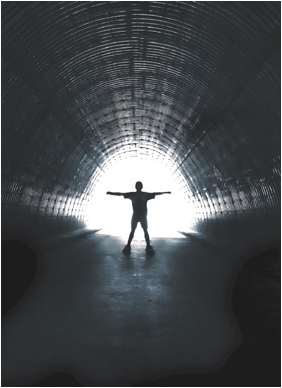

We’re starting to see the light at the end of the online data quality tunnel.

On June 9th, the ARF Online Research Quality Council (ORQC) presented detailed findings from an unprecedented US R&D project on online data quality, called “Foundations of Quality” (FoQ). Now that we’re into the 90-day action plan stage, I wanted to provide an update via my blog.

Industry leaders on the ORQC felt that the issues we uncovered fall into three broad buckets (illustrated with some of the high-leverage issues):

- Panel management

  - Managing the sample source consistently, since different panels produce different results.

  - Managing panelist longevity very closely, since newer recruits give more favorable purchase intent.

  - Over time, migrating away from promising respondents and prospective panelists cash gifts for ad hoc surveys. (FoQ showed that those who take surveys because they want to share their opinions are better respondents.)

- Sample management

  - Setting guidelines for the minimum and maximum number of survey invitations in a month (3-10 completed interviews per month was associated with the highest quality data).

  - Specifying what type of wording in the invitation is biasing and needs to be avoided (e.g., avoid “we are looking for people who…”).

  - Pre- and post-stratifying the data using longevity characteristics as well as demographics.

- Response quality

  - Setting survey length restrictions that everyone will be asked to abide by (length was the number one source of inattentive respondent behavior in filling out the survey).
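For readers who want to see what post-stratifying on longevity as well as demographics could look like in practice, here is a minimal sketch in Python. It is only an illustration and not the method used in FoQ: the cell labels (`"new"`, `"veteran"`, the age groups), the target shares, and the function name `poststratification_weights` are all made-up assumptions. Each respondent gets a weight equal to the target share of their cell divided by that cell's share of the sample.

```python
from collections import Counter


def poststratification_weights(sample_cells, target_shares):
    """Return one weight per respondent (target share / sample share of their cell).

    sample_cells: list of cell labels, one per respondent (hypothetical labels).
    target_shares: dict mapping each cell label to its desired share (sums to 1).
    """
    counts = Counter(sample_cells)
    n = len(sample_cells)
    weights = []
    for cell in sample_cells:
        sample_share = counts[cell] / n
        weights.append(target_shares[cell] / sample_share)
    return weights


# Hypothetical sample: cells combine panel tenure (longevity) with an age group.
respondents = (
    [("new", "18-34")] * 4
    + [("new", "35+")] * 2
    + [("veteran", "18-34")]
    + [("veteran", "35+")]
)

# Assumed targets: each of the four cells should carry a quarter of the weight.
targets = {
    ("new", "18-34"): 0.25,
    ("new", "35+"): 0.25,
    ("veteran", "18-34"): 0.25,
    ("veteran", "35+"): 0.25,
}

weights = poststratification_weights(respondents, targets)

# Weighted share of each cell after post-stratification.
weighted_totals = Counter()
for cell, w in zip(respondents, weights):
    weighted_totals[cell] += w
weighted_shares = {cell: total / sum(weights) for cell, total in weighted_totals.items()}
print(weighted_shares)
```

In this toy sample, newer recruits are over-represented, so they are weighted down while the scarce veteran panelists are weighted up. Real projects would need agreed definitions of the longevity cells and defensible targets, which is exactly the kind of thing the sub-committees are working on.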

Members of the “industry solutions” and metrics committees have been divided among these three buckets to tackle the issues shown, plus others in each bucket. Each sub-committee has supplier and buyer representation. Each team is tasked with creating recommended industry practices, metrics, common vocabulary and other management tools by mid-to-late September so buyers and suppliers can partner to collect trustworthy online research results.

Equally critical to developing the right set of recommendations is consensus-building. If leading buyers and sellers of research do not agree on the rules of the road, if everyone tries to find their own solution, then any one player can poison the well for everyone else.

Permit me a personal story about that. A few weeks ago, I received an invitation from a reputable survey firm to participate in a 60-minute survey in exchange for a $10 cash gift (already two “no-nos”!). After 25 minutes, I flunked a qualifying question, was terminated and received NO gift! Imagine how bad survey-company practice, coupled with unreasonable client demands, could turn respondents off ANYONE’S survey invitations forever.

However, I AM optimistic that the ARF will achieve industry consensus. Many of the leading buyers (including Bayer, Capital One, Coke, ESPN, General Motors, Microsoft, Procter, Unilever), 17 leading panel companies and other research organizations all participated in the FoQ research and via the ARF continue to work collaboratively to craft a solution. The ARF is also working closely with all other industry associations via ACE (Association Collaborative Effort). It really is a remarkable industry collaboration.

If we stay on our timeline to provide a solution by September, and leading players all realize this is our shared future that we’re talking about, yes, we’re seeing the light at the end of the tunnel.
